AWS CodePipeline with Bitbucket

At work I needed to deploy an application with AWS CodePipeline, but the code lives in a Bitbucket repository. This was inconvenient because AWS CodePipeline does not support Bitbucket as a source, although AWS CodeBuild does.

If you search online, you can find workarounds for this problem, such as adding a webhook to the repository that calls a Lambda function, which writes a file to a bucket that then triggers AWS CodePipeline [1]. Another option is to use Bitbucket Pipelines to create the object placed in the bucket, or even to mirror the contents of the Bitbucket repository in AWS CodeCommit.

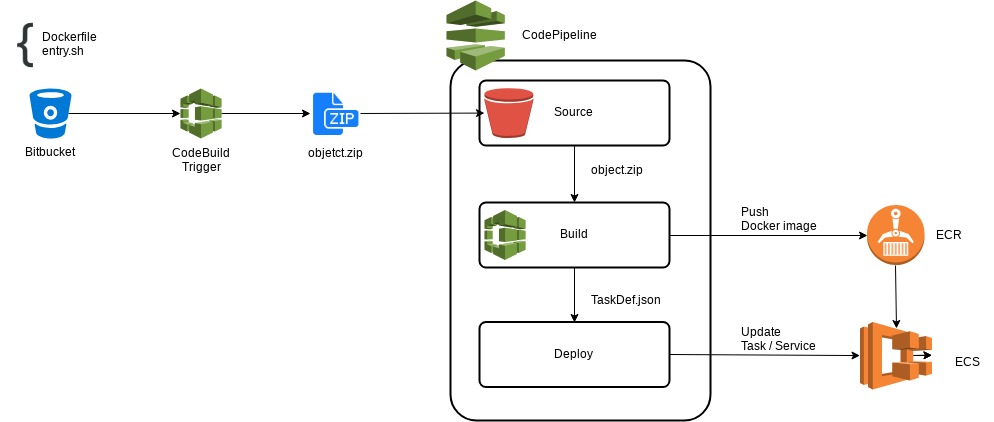

These workarounds didn’t convince me, so I looked for a solution that stayed entirely on AWS and did not require me to configure repositories. The key was that AWS CodeBuild does support Bitbucket. So instead of using Bitbucket Pipelines to generate the source for AWS CodePipeline, I use AWS CodeBuild to generate a zip file with the application's source code, which the pipeline then uses as input.

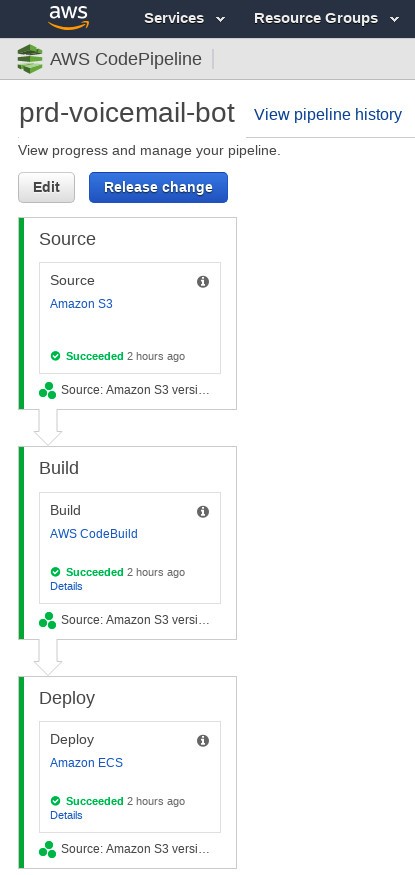
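The source step described above can be sketched as a CodeBuild project whose source is Bitbucket and whose artifact is a zip in S3. This is only a minimal sketch: the project name, repository URL, bucket, role ARN, build image, and artifact name are placeholders I made up, and it assumes the repository contains a buildspec that lists the source files as artifacts.

```python
import json


def bitbucket_source_project(name, repo_url, bucket, role_arn):
    """Build a request for CodeBuild's CreateProject API.

    Every value passed in here is a placeholder chosen for this sketch.
    """
    return {
        "name": name,
        # CodeBuild can clone from Bitbucket even though CodePipeline cannot.
        "source": {"type": "BITBUCKET", "location": repo_url},
        # Package the build output as a single zip object in the bucket.
        "artifacts": {
            "type": "S3",
            "location": bucket,
            "name": "source.zip",  # assumed object name
            "packaging": "ZIP",
            "namespaceType": "NONE",
        },
        "environment": {
            "type": "LINUX_CONTAINER",
            "image": "aws/codebuild/standard:7.0",  # assumed build image
            "computeType": "BUILD_GENERAL1_SMALL",
        },
        "serviceRole": role_arn,
    }


params = bitbucket_source_project(
    name="bitbucket-to-s3",  # hypothetical
    repo_url="https://bitbucket.org/example-team/example-app.git",  # hypothetical
    bucket="example-pipeline-source",  # hypothetical
    role_arn="arn:aws:iam::123456789012:role/example-codebuild-role",  # hypothetical
)
print(json.dumps(params, indent=2))

# With boto3 installed and credentials configured, the request could be sent as:
#   boto3.client("codebuild").create_project(**params)
```

The pipeline would then use the zip object in that bucket as its S3 source; the S3 source action needs versioning enabled on the bucket.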

In this particular case the application is a Docker image that is built with AWS CodeBuild and then stored in AWS ECR. This CodeBuild project produces the ECS task definition as its output artifact, which is taken as input by the ECS deployment phase, updating the service and therefore the application.
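The post does not show what that output artifact looks like. Assuming the standard ECS deploy action in CodePipeline, the file it reads is `imagedefinitions.json`, which maps a container name to the image URI that was just pushed. A small sketch of generating it, with a made-up container name and image URI:

```python
import json


def image_definitions(container_name, image_uri):
    """Return the JSON text expected in imagedefinitions.json."""
    return json.dumps([{"name": container_name, "imageUri": image_uri}])


# Both values below are hypothetical; the container name would have to match
# the one in the ECS task definition used by the service.
content = image_definitions(
    "app",
    "123456789012.dkr.ecr.us-east-1.amazonaws.com/app:latest",
)
print(content)

# In a build step, this text would be written to imagedefinitions.json and
# listed as an artifact so the deploy stage can pick it up.
```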

Not everything is perfect.

Here are a few things to consider with this solution:

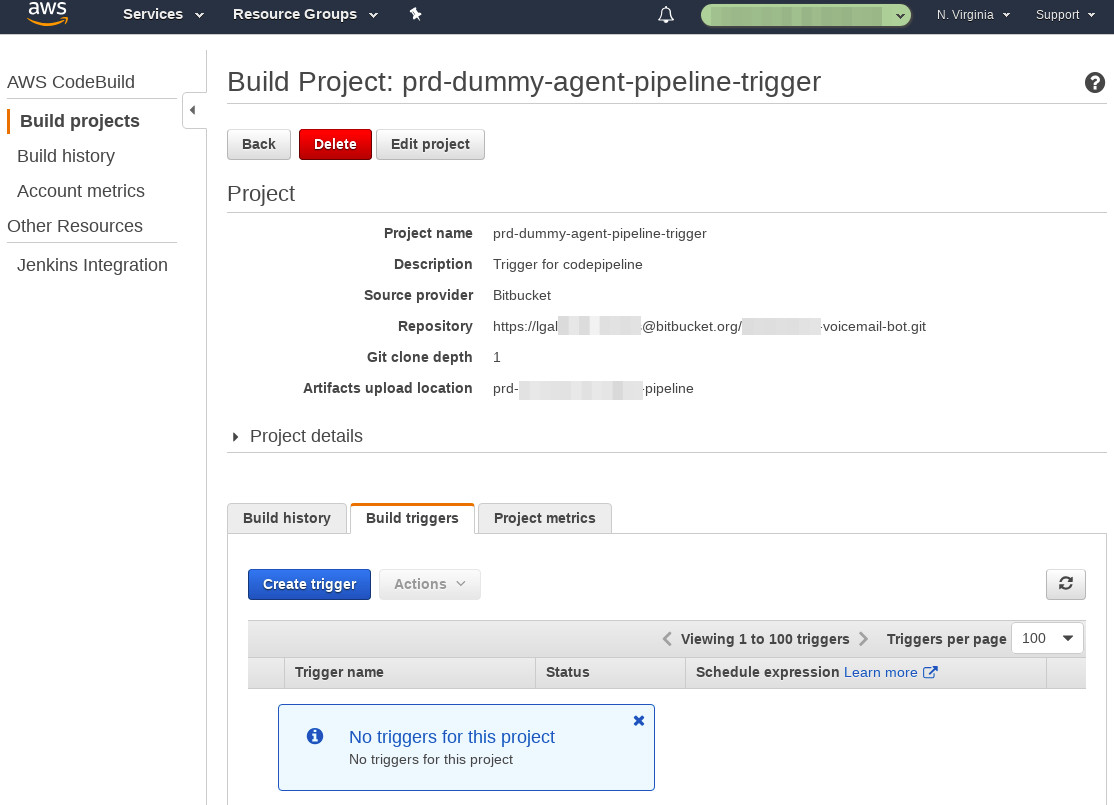

- For CodeBuild to access private Bitbucket repositories, you must authenticate with a Bitbucket user through the AWS web console. When deciding which user to connect, consider a service account that has read-only access to the repository.

- The AWS CodeBuild project will not run automatically when you update the repository, so you need to start it yourself. If you want this to be automatic, or as close to it as possible, you can set up a scheduled trigger in the project.
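
One way to sketch the scheduled trigger outside the console is an EventBridge (CloudWatch Events) rule that starts the CodeBuild project at a fixed rate. The rule name, ARNs, and the five-minute interval below are assumptions for illustration, and the role is assumed to be allowed to call `codebuild:StartBuild`.

```python
def schedule_requests(rule_name, project_arn, role_arn, minutes=5):
    """Build PutRule and PutTargets requests for a rate-based schedule."""
    # Rate expressions use the singular unit only when the value is 1.
    unit = "minute" if minutes == 1 else "minutes"
    put_rule = {
        "Name": rule_name,
        "ScheduleExpression": f"rate({minutes} {unit})",
        "State": "ENABLED",
    }
    put_targets = {
        "Rule": rule_name,
        # The target is the CodeBuild project; the role lets the rule start it.
        "Targets": [{"Id": "start-build", "Arn": project_arn, "RoleArn": role_arn}],
    }
    return put_rule, put_targets


rule, targets = schedule_requests(
    rule_name="poll-bitbucket",  # hypothetical
    project_arn="arn:aws:codebuild:us-east-1:123456789012:project/bitbucket-to-s3",  # hypothetical
    role_arn="arn:aws:iam::123456789012:role/example-events-role",  # hypothetical
)
print(rule["ScheduleExpression"])

# With boto3 installed and credentials configured:
#   events = boto3.client("events")
#   events.put_rule(**rule)
#   events.put_targets(**targets)
```

A shorter interval gets closer to an automatic trigger at the cost of more builds.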
